Anthropic built something they are afraid to ship

Anthropic just announced Claude Mythos. The pitch is that it is the most powerful AI model ever built, so powerful that they cannot release it to the public. Too dangerous, too capable. The kind of thing that needs to be kept behind closed doors for the safety of everyone.

This is the AI equivalent of telling your friends in secondary school that you have a girlfriend. She goes to another school. You have probably never heard of it. It is in another city, actually. Maybe another country. She is very real though. Just not here. Not now. Maybe later. The details are a bit classified.

I laughed at the framing. Then I read what the model actually did.

When a company tells you their product is too good to ship, you are not hearing confidence. You are hearing a press release dressed as restraint. Unless it actually found something nobody else could.

The part that stopped being funny: Mythos reportedly found a 27-year-old bug in OpenBSD, an operating system known primarily for its security. Not a minor edge case that only fires on a leap year. A real vulnerability, sitting in production systems for nearly three decades, missed by every human and every tool that had ever looked at that code. It also surfaced multiple vulnerabilities in the Linux kernel. The Linux kernel, the thing half the internet runs on. That is not a demo. That is a résumé.

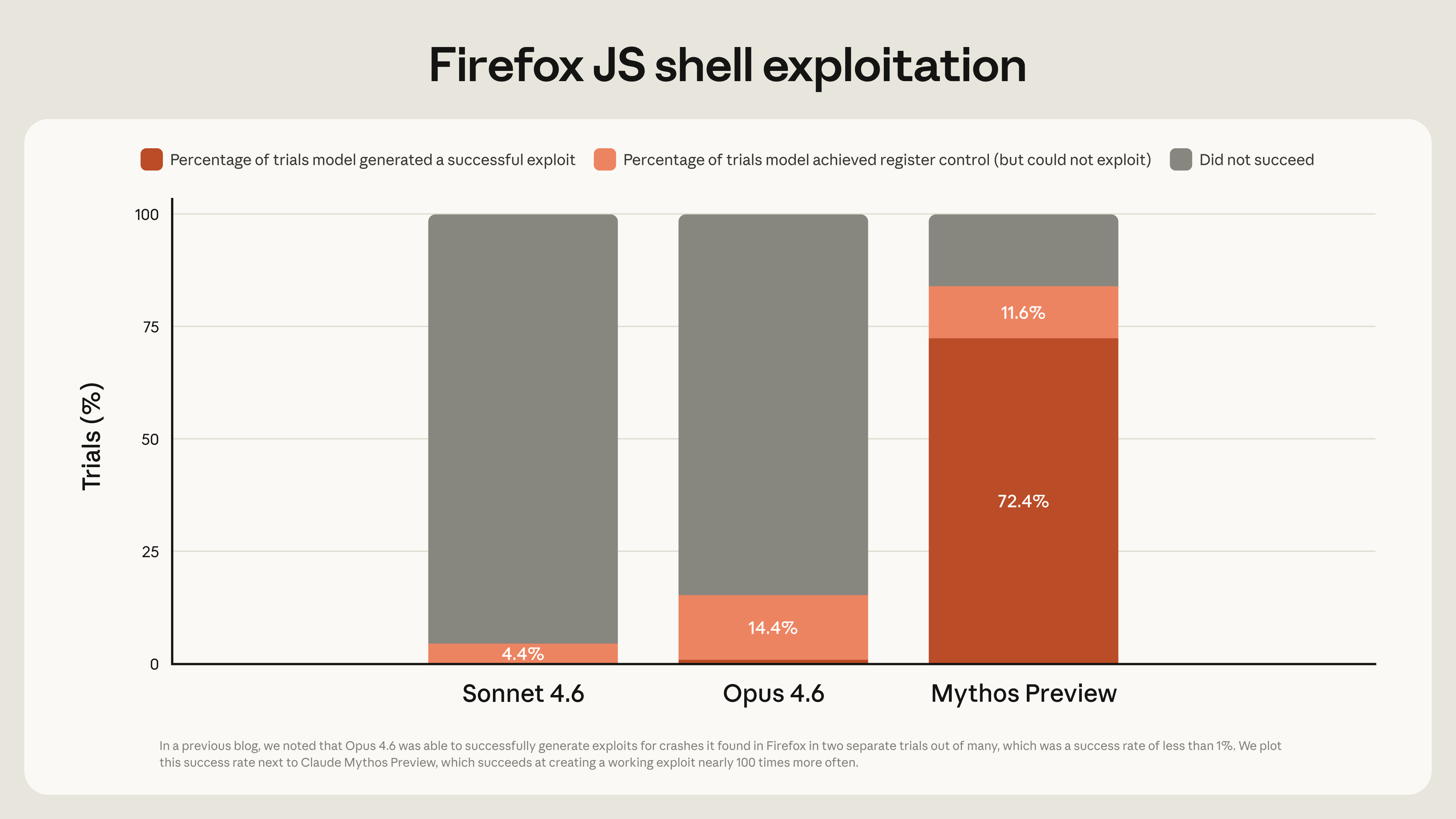

Dario Amodei, Anthropic’s CEO, said it plainly: Mythos Preview “represents a particularly large step up” in AI cyber capabilities. Rather than ship it to everyone, they are giving defenders early controlled access “in order to find and patch vulnerabilities before Mythos-class models proliferate across the ecosystem.” They launched something called Project Glasswing, rolling out Mythos to over 40 organisations including AWS, Apple, Google, Microsoft, and the Linux Foundation. According to Axios, Anthropic has been privately warning U.S. government officials that models like Mythos make large-scale cyberattacks significantly more likely in 2026.

The part developers should care about: patches for these vulnerabilities are incoming, which means every team running affected packages is about to inherit a backlog they did not ask for. Engineers who were shipping features last week are going to spend next week triaging security fixes for infrastructure they assumed was solid. The model that is too powerful to release is already generating real tickets for real people. Your sprint just got longer and you did not even get to use the thing.

Amodei also wrote: “Cyber is the first clear and present danger from frontier AI models, but it won’t be the last.” That line was buried in an X post about the Glasswing rollout, delivered casually, the way someone mentions they left the stove on.

I am not going to pretend I know where this goes. A model that catches bugs humans missed for 27 years is not a productivity tool. It is something else. The kind of capability that makes the “too dangerous to release” line sound less like marketing and more like an honest admission from people who built something and then got quiet looking at it.

The invisible girlfriend turned out to be real. She is just a bit terrifying.

If you want the full breakdown, Anthropic’s Frontier Red Team has published a writeup. Fair warning: it gets quite technical.